wandb

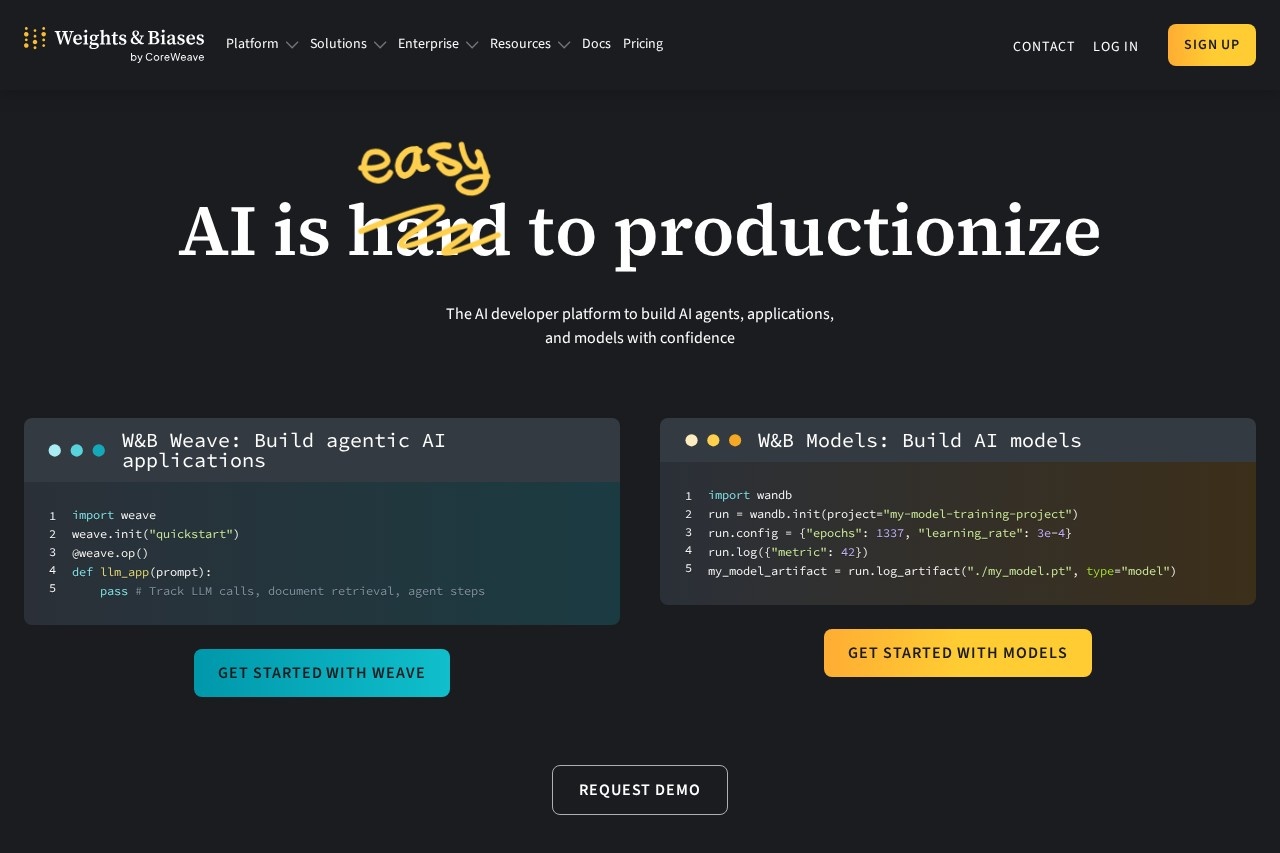

wandb by Weights & Biases is a platform for machine learning experiment tracking, model visualization, and collaboration, helping teams manage and optimize their ML workflows.

Weights & Biases (wandb) Review: Streamlining the Machine Learning Lifecycle

What is wandb?

Weights & Biases (wandb) is a dedicated platform built to bring order to the often chaotic process of machine learning development. At its core, it’s a system of record for your ML projects. It goes beyond simple logging to provide a centralized hub for experiment tracking, model visualization, and team collaboration. In practice, this means replacing scattered spreadsheets, local log files, and ad-hoc scripts with a unified, cloud-based dashboard. The platform is designed to integrate directly into your existing ML code, capturing metrics, hyperparameters, output files, and system metrics automatically. This creates a searchable, reproducible history of every training run, model version, and result, which is fundamental for scientific rigor and efficient iteration in modern AI teams.

Application Scenarios

wandb shines in scenarios where tracking complexity and collaboration are paramount. It is exceptionally useful for hyperparameter tuning and optimization, where teams need to compare hundreds of runs to understand the impact of different configurations on model performance. In research and academic settings, it provides the necessary audit trail to ensure experiments are reproducible, making it easier to validate findings and write papers. For production ML engineering, it acts as a version control system for models, linking specific model artifacts back to the exact code, data, and parameters that created them. This is critical for debugging model regression and managing staged rollouts. Furthermore, in cross-functional team projects, it serves as a shared source of truth where data scientists, ML engineers, and even business stakeholders can monitor progress, comment on results, and review model performance without needing direct access to the codebase.

Main Features

The platform’s functionality is organized around a few interconnected features. Experiment Tracking is the foundation. With minimal code integration, it logs metrics like loss and accuracy, hyperparameters, and system resource consumption (GPU/CPU usage) in real time to a cloud dashboard. This is complemented by Visualization Tools that automatically generate charts and graphs from logged data, allowing for intuitive comparison between runs. Model Versioning is integrated as well, enabling users to save and catalog model checkpoints directly to the wandb cloud, complete with metadata. The Collaborative Dashboard is a live reporting tool where teams can share findings, group related runs into projects, and use commenting features to discuss results. For deeper analysis, the platform offers Interactive Tables (W&B Tables) to query, filter, and sort through model predictions, validation datasets, and evaluation results. It also provides tools for Dataset Versioning and Tracking, helping to maintain the crucial link between specific data slices and the models trained on them.
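To make the model versioning idea concrete, here is a minimal sketch of saving a checkpoint as a versioned artifact. The project name `demo-project`, the artifact name `toy-model`, and the helper functions are hypothetical, and the "model" is just a small dictionary written as JSON. It assumes the `wandb` package is installed and you are logged in.

```python
import json
from pathlib import Path


def save_checkpoint(weights, path):
    """Write a toy 'model' (a dict of floats) to disk as JSON and return its path."""
    path = Path(path)
    path.write_text(json.dumps(weights))
    return path


def log_model_version(weights, metadata):
    """Upload the checkpoint as a versioned artifact (needs a wandb account)."""
    import wandb  # imported lazily; requires `pip install wandb` and `wandb login`

    # "demo-project" and "toy-model" are placeholder names for this sketch.
    run = wandb.init(project="demo-project", job_type="train")
    checkpoint = save_checkpoint(weights, "model.json")

    # An artifact is a named, versioned bundle of files plus free-form metadata.
    artifact = wandb.Artifact(name="toy-model", type="model", metadata=metadata)
    artifact.add_file(str(checkpoint))
    run.log_artifact(artifact)
    run.finish()
```

Calling `log_model_version({"w": 0.5, "b": -1.0}, {"val_loss": 0.12})` would upload one version of the artifact; logging again under the same artifact name with changed file contents should produce a new version rather than overwrite the old one.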

Target Users

wandb is built for professionals and teams actively building machine learning systems. Its primary users are Machine Learning Researchers and Data Scientists who need to rigorously track experiments and iterate quickly. ML Engineers and MLOps practitioners leverage it to bring reproducibility and oversight to the model development lifecycle, bridging the gap between research and production. Academic research teams and students find it invaluable for managing group projects and ensuring the reproducibility required for scientific publication. Finally, tech leads and engineering managers in AI-driven companies use the collaborative dashboards to monitor team progress, review key results, and coordinate complex projects without getting bogged down in the raw code.

How to use wandb?

Getting started with wandb is straightforward. First, you create an account on the wandb website and set up a new project. Integration into your workflow happens through the lightweight Python library. Typically, you initialize a run with a few lines of code at the start of your training script, defining the project name and an optional configuration that holds your hyperparameters. From there, you log metrics and media (like images or plots) by calling wandb.log() within your training loop. The library automatically handles syncing this data to your cloud dashboard.
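The steps above can be sketched roughly as follows. This is an illustration rather than an official quickstart: the project name `demo-project`, the toy `simulate_training` loop, and the config values are all made up. The loop takes a `log` callable so it can run without wandb; in real use you pass `wandb.log`.

```python
import math
import random


def simulate_training(epochs, learning_rate, log, seed=0):
    """Toy training loop: calls log({...}) once per epoch and returns the final loss."""
    rng = random.Random(seed)
    loss = 1.0
    for epoch in range(epochs):
        # Fake "training": the loss decays at a rate set by the learning rate,
        # with a little noise added so the curve is not perfectly smooth.
        loss = loss * math.exp(-learning_rate) + rng.uniform(0.0, 0.01)
        log({"epoch": epoch, "loss": loss})
    return loss


def main():
    import wandb  # imported lazily; requires `pip install wandb` and `wandb login`

    # Hyperparameters go into the run's config; the project name is a placeholder.
    config = {"epochs": 10, "learning_rate": 0.1}
    run = wandb.init(project="demo-project", config=config)

    # Each wandb.log call sends one dictionary of metrics to the dashboard.
    simulate_training(config["epochs"], config["learning_rate"], log=wandb.log)
    run.finish()
```

Calling `main()` starts a run, streams ten loss values, and closes the run. Passing `log=print` or a list's `append` method instead is a quick way to check the loop locally before sending anything to the dashboard.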

You can then open the wandb web application to view live-updating charts of your metrics, compare different runs side by side, and organize runs into groups for systematic comparison. For team use, you invite collaborators to projects, where they can view, comment, and fork existing runs. More advanced usage involves logging model artifacts (like trained model files) and datasets, using sweeps for automated hyperparameter tuning, and using the API to query your logged data programmatically for custom reporting. The platform works with major ML frameworks such as PyTorch, TensorFlow, and scikit-learn through its open and flexible library.
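As one illustration of the sweeps feature mentioned above, the sketch below defines a random search over two hyperparameters and hands it to a sweep agent. The parameter ranges, the toy `objective` function, and the project name `demo-project` are assumptions chosen for the example, not recommendations.

```python
def build_sweep_config(metric_name="loss"):
    """Return a sweep definition: random search that minimizes the given metric."""
    return {
        "method": "random",
        "metric": {"name": metric_name, "goal": "minimize"},
        "parameters": {
            "learning_rate": {"min": 0.001, "max": 0.1},
            "batch_size": {"values": [16, 32, 64]},
        },
    }


def objective(learning_rate, batch_size):
    """Toy stand-in for a validation loss; a real script would train a model here."""
    return (learning_rate - 0.01) ** 2 + 1.0 / batch_size


def train():
    """One sweep trial: read the sampled hyperparameters and log the resulting loss."""
    import wandb  # imported lazily; requires `pip install wandb` and `wandb login`

    with wandb.init() as run:
        loss = objective(run.config["learning_rate"], run.config["batch_size"])
        run.log({"loss": loss})


def launch_sweep(count=5):
    """Register the sweep and run `count` trials in this process."""
    import wandb

    # "demo-project" is a placeholder project name.
    sweep_id = wandb.sweep(sweep=build_sweep_config(), project="demo-project")
    wandb.agent(sweep_id, function=train, count=count)
```

Calling `launch_sweep()` registers the sweep and runs five trials locally. The metric name in the config has to match the key that `train` logs, otherwise the sweep has nothing to optimize against.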

wandb - AI Tool Detail

Category: Training Deployment Tool

Visit Link: https://wandb.ai/

Tags: machine learning, experiment tracking, model visualization, collaboration, workflow optimization