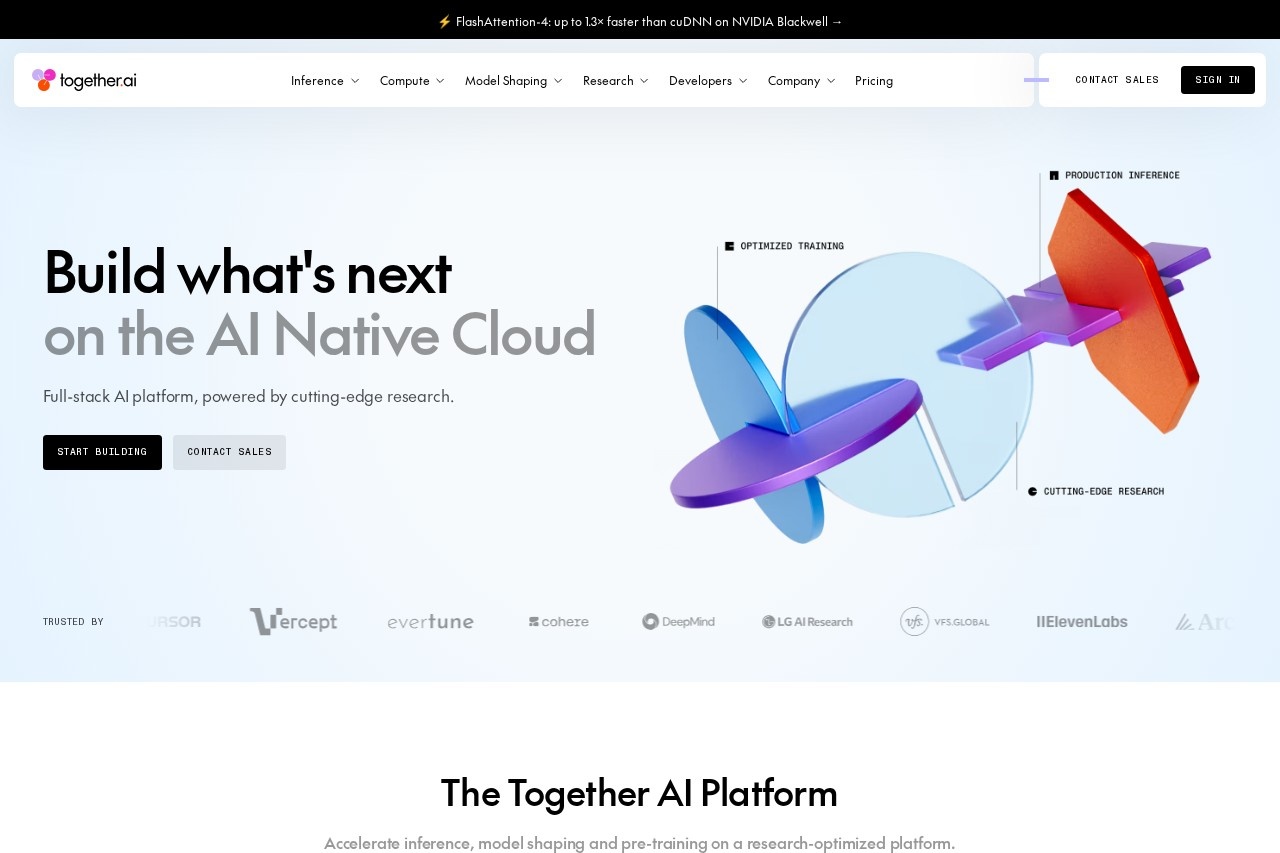

Together AI

Together AI provides a cloud platform for developers to build, train, and deploy open-source generative AI models, including large language models and image-generation models, with high-performance inference.

What is Together AI?

Together AI is a full-stack AI cloud platform that enables developers to build, train, and deploy open-source generative AI models, including large language models and image-generation models. It offers high-performance inference, model shaping, and pre-training capabilities on research-optimized infrastructure. The platform supports the entire AI development journey, from experimentation to massive scale, without requiring users to manage their own infrastructure. According to the company, it is trusted by enterprise teams and backed by its own systems research.

Application scenarios

Serverless inference

Run open-source models on demand with no infrastructure management or long-term commitments.

Batch inference

Process massive workloads asynchronously, scaling to 30 billion tokens per model.
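Batch jobs are typically submitted as a JSONL file with one request per line. The sketch below builds such a file in Python, assuming an OpenAI-style layout in which each line has a unique `custom_id` and a `body` holding a chat-completions request. The exact schema, the model ID, and the helper names `build_batch_lines` and `write_batch_file` are assumptions for illustration, so check Together's batch documentation before relying on them.

```python
import json
from pathlib import Path

# Hypothetical example model ID; substitute any model your account can use.
MODEL = "meta-llama/Llama-3.3-70B-Instruct-Turbo"


def build_batch_lines(prompts, model=MODEL, max_tokens=256):
    """Return one JSON string per prompt in an OpenAI-style batch layout.

    Assumption: each line carries a unique "custom_id" (used to match
    results back to requests) and a "body" with a chat-completions request.
    """
    lines = []
    for i, prompt in enumerate(prompts):
        record = {
            "custom_id": f"request-{i}",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
                "max_tokens": max_tokens,
            },
        }
        lines.append(json.dumps(record))
    return lines


def write_batch_file(prompts, path="batch_input.jsonl"):
    """Write the requests as JSONL (one JSON object per line) and return the path."""
    text = "\n".join(build_batch_lines(prompts)) + "\n"
    Path(path).write_text(text, encoding="utf-8")
    return path
```

For example, `write_batch_file(["Summarize the plot of Hamlet.", "Translate 'good morning' into French."])` produces a two-line `batch_input.jsonl`. Uploading that file and creating the batch job happen through Together's API or SDK, which this sketch does not cover.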

Dedicated model inference

Deploy models on dedicated infrastructure for speed, control, and cost efficiency.

Dedicated container inference

Deploy video, audio, and image models on GPU infrastructure optimized for generative media workloads.

Fine-tuning

Fine-tune open-source models for production workloads to improve accuracy, reduce hallucinations, and control behavior.

Code sandboxing

Set up secure, fast code sandboxes for AI apps and agents at scale.

Research acceleration

Accelerate reinforcement learning rollouts by up to 50% with distribution-aware speculative decoding.
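Speculative decoding pairs a small draft model with the large target model: the draft proposes several tokens cheaply, and the target verifies them together, so more than one token can be committed per expensive step. The Python sketch below shows the standard greedy form of the technique with toy stand-in models. It is not Together's distribution-aware variant, whose details this page does not describe, and the names `speculative_step`, `draft`, and `target` are hypothetical.

```python
from typing import Callable, List

# A "model" here is a toy function mapping a token prefix to its next token
# (greedy decoding). Real systems use neural networks; these are stand-ins.
NextToken = Callable[[List[int]], int]


def speculative_step(prefix: List[int], draft: NextToken,
                     target: NextToken, k: int = 4) -> List[int]:
    """Run one round of greedy speculative decoding and return the new sequence.

    1. The cheap draft model proposes k tokens autoregressively.
    2. The target model checks each proposed position (a real system does
       this in one batched forward pass; here it is a simple loop).
    3. The longest agreeing prefix is kept, plus the target's own token at the
       first mismatch, or one bonus target token if all k were accepted.
    """
    # Step 1: draft proposes k tokens.
    proposal: List[int] = []
    ctx = list(prefix)
    for _ in range(k):
        tok = draft(ctx)
        proposal.append(tok)
        ctx.append(tok)

    # Step 2 and 3: target verifies the proposal position by position.
    accepted: List[int] = []
    ctx = list(prefix)
    for tok in proposal:
        expected = target(ctx)
        if expected != tok:
            accepted.append(expected)  # target's correction ends the round
            return prefix + accepted
        accepted.append(tok)
        ctx.append(tok)
    accepted.append(target(ctx))  # all k accepted: target adds one more token
    return prefix + accepted
```

Because every committed token is one the target model would have chosen itself, greedy speculative decoding reproduces the target's greedy output exactly; the speed-up depends on how often the draft agrees with the target. For instance, with a draft that matches the target on the first proposed token but not the second, one round commits two tokens: the accepted draft token plus the target's correction.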

Core Features

Faster inference

Achieve up to 2x faster inference powered by cutting-edge research.

Lower cost

Reduce costs by up to 60% with workload-specific optimization.

Faster pre-training

Speed up pre-training by up to 90% using the Together Kernel Collection.

Full-stack cloud

Power every step of AI development—from experimentation to massive scale—with inference, compute, model shaping, and storage.

Managed storage

High-performance object storage and parallel filesystems optimized for AI workloads with zero egress fees.

Accelerated compute

Scale from self-serve instant clusters to thousands of GPUs, all optimized for better performance.

Sandbox

Use fast, secure code sandboxes at scale, including full development environments.

Fine-tuning

Fine-tune open-source models without managing training infrastructure, using the latest research techniques.

Research-backed features

Foundational systems research for production AI, including distribution-aware speculative decoding and stable looped models.

Target users

- AI developers and engineers: Build, train, and deploy generative AI models without managing infrastructure.

- Machine learning researchers: Access a research-optimized platform with cutting-edge inference and training capabilities.

- Enterprise teams: Deploy models on dedicated infrastructure for speed, control, and cost efficiency.

- Startups and scale-ups: Scale from self-serve clusters to thousands of GPUs as needed.

- Media and content creators: Deploy video, audio, and image models on GPU infrastructure optimized for generative media workloads.

How to use Together AI?

- Visit the Together AI website and click "Start building" or "Contact Sales" to get started.

- Choose your deployment option: serverless inference, batch inference, dedicated model inference, or dedicated container inference.

- For serverless inference, run open-source models on demand with no infrastructure management.

- For fine-tuning, use the platform's tools to fine-tune open-source models for production workloads.

- Use the sandbox feature to set up secure code sandboxes for AI apps and agents.

- Scale compute from self-serve instant clusters to thousands of GPUs as needed.
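As an illustration of the serverless option, the Python sketch below sends one chat request over HTTP using only the standard library. It assumes Together exposes an OpenAI-compatible chat-completions endpoint at `https://api.together.xyz/v1/chat/completions` and that the API key is stored in a `TOGETHER_API_KEY` environment variable. The model ID and the helper names `build_request` and `chat` are illustrative choices, so confirm the endpoint and the available models in Together's API reference.

```python
import json
import os
import urllib.request

# Assumption: OpenAI-compatible chat-completions endpoint; verify in the API docs.
API_URL = "https://api.together.xyz/v1/chat/completions"
# Hypothetical example model ID; pick any serverless model available to you.
MODEL = "meta-llama/Llama-3.3-70B-Instruct-Turbo"


def build_request(prompt: str, api_key: str, model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a POST request for one chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the first choice's text (needs network and a key)."""
    req = build_request(prompt, os.environ["TOGETHER_API_KEY"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a key exported, `print(chat("In one sentence, what is serverless inference?"))` prints the model's reply. Separating request construction from sending keeps the payload easy to inspect and test without network access; Together also publishes an official Python SDK that wraps the same calls.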

Effect review

Together AI advertises up to 2x faster inference and up to 60% lower costs through workload-specific optimization. Its full-stack approach, covering inference, compute, model shaping, and storage, makes it a comprehensive option for teams at any stage of AI development. Research-backed features such as distribution-aware speculative decoding and stable looped models add credibility for technical users. The website does not provide user testimonials or specific quality metrics, so these figures should be read as the vendor's own claims; still, the platform's focus on open-source models and production-ready infrastructure makes it a strong choice for developers seeking flexibility and performance without vendor lock-in.

Frequently Asked Questions

What is Together AI?

What models are available on Together AI?

Does Together AI provide GPU infrastructure for training?

How does Together AI ensure low-latency inference?

Is Together AI suitable for production deployments?

Category: Large Model Platform

Visit Link: https://together.ai/

Tags: open-source AI, cloud platform, generative AI, model deployment, high-performance inference