Multimodal 2026-04-16 WIRED AI

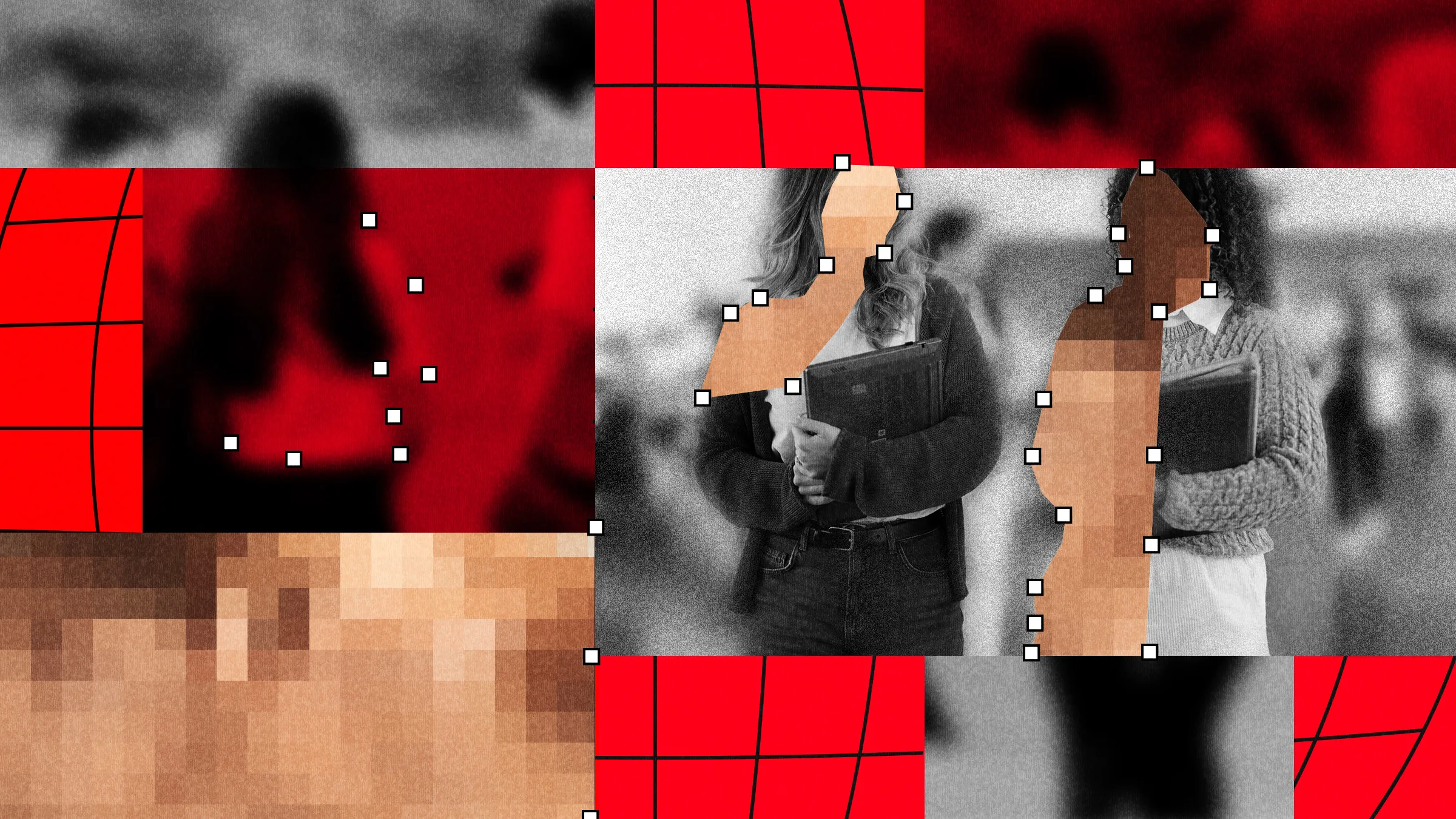

Deepfake Nude Crisis Impacts Hundreds of Students Globally

An investigation by WIRED and Indicator has found that AI-generated deepfake nude imagery is a widespread global crisis affecting hundreds of students, not a series of isolated incidents. The analysis identified nearly 90 schools and approximately 600 students across multiple countries affected by this technology, which is being weaponized for harassment and bullying.

The report paints a picture of a rapidly growing problem with no signs of slowing down. Perpetrators, often fellow students, are using readily accessible AI tools to create non-consensual fake nude images of their peers. This digital abuse inflicts severe psychological trauma on victims, damaging reputations and creating an environment of fear and violation within school communities.

The scale and ease with which this harassment can now be carried out call for urgent and multifaceted responses, yet schools, tech platforms, and lawmakers are struggling to keep pace. The crisis underscores the immediate need for better digital literacy education, robust reporting mechanisms on social platforms, and potentially new legal frameworks to address this specific form of image-based sexual abuse. As the technology to create convincing forgeries becomes even more accessible, the window for effective intervention is narrowing. Protecting vulnerable individuals from this new frontier of harm will require coordinated action across society.